Interpreting Supplement Research

Research findings about supplements are frequently simplified, overstated, or applied in ways the original studies didn't support. A supplement study that shows a promising result in one population under specific conditions may tell you very little about whether that supplement is right for your situation. Understanding how to read and interpret evidence — not just whether a study exists — is one of the most practical skills in supplement decision-making. This page focuses on that: not technical methodology, but how to evaluate what a finding actually means.

Why Interpretation Matters

- Results don't automatically transfer. Study findings depend on the population studied, the dose used, and the conditions under which the research was conducted. A result in one context does not reliably predict outcomes in another.

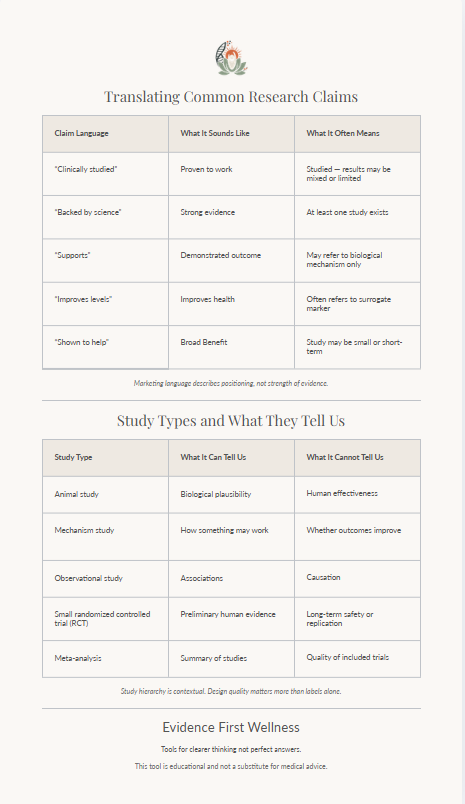

- Headlines routinely simplify. Findings are compressed into shareable claims — often stripping out the qualifications, limitations, and uncertainty that make a result meaningful. Marketing language does this even more aggressively.

- Applied incorrectly, evidence misleads. Knowing that a study exists is not the same as knowing what it shows. Interpretation determines whether evidence is used appropriately or becomes the basis for a decision it doesn't actually support.

How to Interpret Research

Look at Who Was Studied

The population in a study shapes what its findings mean. Research conducted in adults with a documented deficiency may not apply to healthy children, and vice versa. Age, health status, baseline nutrition, and life stage all affect how a person responds to a supplement.

Check the Dose and Form

Studies use specific doses and specific ingredient forms. If a product uses a different dose or a different form than what was studied, the research does not directly support that product — even if the ingredient name is the same.

Distinguish Statistical from Practical Significance

A statistically significant result means that a difference as large as the one observed would be unlikely if the supplement had no real effect. It does not mean the effect is large enough to matter in practice. With enough participants, even a very small difference in a controlled study can reach statistical significance while having little real-world relevance.
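As a rough illustration of the gap between the two, the sketch below runs a simple two-sided z-test on an invented trial. Every number in it (the group means, the standard deviation, the 5,000 participants per group) is hypothetical and chosen only to make the point, and the function name `two_group_comparison` is an assumption for this example, not a method taken from any particular study.

```python
from math import sqrt
from statistics import NormalDist


def two_group_comparison(mean_supplement, mean_placebo, sd, n_per_group):
    """Compare two equal-sized groups that share a known standard deviation.

    Returns the raw difference, a two-sided p-value from a z-test,
    and the standardized effect size (Cohen's d).
    """
    difference = mean_supplement - mean_placebo
    standard_error = sd * sqrt(2 / n_per_group)
    z = difference / standard_error
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))
    cohens_d = difference / sd
    return difference, p_value, cohens_d


# Hypothetical trial: scores on a 100-point scale, 5,000 people per group.
difference, p_value, cohens_d = two_group_comparison(
    mean_supplement=50.5, mean_placebo=50.0, sd=10.0, n_per_group=5000
)

print(f"difference = {difference:.1f} points")
print(f"p-value    = {p_value:.4f}")
print(f"Cohen's d  = {cohens_d:.2f}")
```

With these invented inputs the p-value falls below the conventional 0.05 threshold, yet the difference is half a point on a 100-point scale and the standardized effect is 0.05, well under the 0.2 that is commonly treated as a small effect. The result is statistically significant and may still be too small to notice in everyday life.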

Consider Study Duration

A study lasting four weeks may show short-term effects that don't persist. Longer-term outcomes — or whether benefits require sustained use — are often unknown unless specifically studied. Duration matters for understanding how findings apply to ongoing use.

Look for Replication, Not Single Studies

A single well-designed study provides a data point, not a conclusion. Confidence in a finding increases when multiple independent studies, using different populations and methods, arrive at consistent results. Single studies — even strong ones — should be held lightly.

Note Who Funded the Research

Industry-funded studies are not automatically invalid, but funding source is relevant context. Manufacturer-funded research has a documented tendency to report results that favor the sponsor's product. Independent replication of those findings carries more weight.

Common Misinterpretations

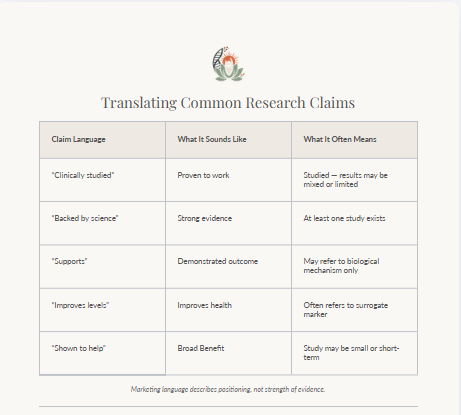

- Association treated as causation. Observational studies can show that two things occur together — they cannot establish that one causes the other. Many supplement claims rest on associations that have not been confirmed in controlled trials.

- "Clinically studied" read as proven. Having been studied is not the same as having demonstrated clear benefit. A study may show no significant effect, or may have been too small or too short to be meaningful. The claim that something is "clinically studied" says nothing about what the research found.

- Results applied across populations. Research findings from one group are routinely extended to others without evidence. Studies in adults are applied to children; studies in people with a deficiency are applied to those without one. This kind of extrapolation should be treated with caution.

- Single studies treated as settled. One study, even a well-designed randomised controlled trial, does not on its own confirm or refute a hypothesis. Findings need to hold up across multiple studies before they can be considered reliable evidence.
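The first misinterpretation in this list, association treated as causation, can be made concrete with a small simulation. The sketch below is a toy model under invented assumptions: a hidden trait (labelled `health_consciousness` here purely for illustration) drives both supplement use and a health outcome, while the supplement itself has no effect on the outcome at all. None of the data is real.

```python
import random
from math import sqrt


def pearson(xs, ys):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    return cov / sqrt(var_x * var_y)


rng = random.Random(0)  # fixed seed so the run is repeatable
n = 5000

# Hidden trait that influences both variables (the confounder).
health_consciousness = [rng.gauss(0, 1) for _ in range(n)]
# Supplement use and outcome each depend on the trait plus independent noise.
# The outcome never depends on supplement use.
supplement_use = [h + rng.gauss(0, 1) for h in health_consciousness]
outcome = [h + rng.gauss(0, 1) for h in health_consciousness]

overall = pearson(supplement_use, outcome)

# Hold the confounder roughly fixed by looking only at similar people.
band = [
    (s, o)
    for h, s, o in zip(health_consciousness, supplement_use, outcome)
    if abs(h) < 0.1
]
within_band = pearson([s for s, _ in band], [o for _, o in band])

print(f"correlation across everyone:        {overall:.2f}")
print(f"correlation among similar people:   {within_band:.2f}")
```

Across the whole simulated population, supplement use and the outcome are clearly correlated even though one does not cause the other. Among people with a similar level of the hidden trait, the correlation largely disappears. Observational data cannot rule out this kind of explanation on its own, which is why such associations need confirmation in controlled trials.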

What This Means in Practice

The Supplement Tradeoffs Guide

A printable, one-page framework for applying structured thinking to real supplement comparisons — using the same evaluation approach described across this site.

This page is part of the evidence-first approach to supplement evaluation used across this site — focused on applying structured thinking to complex information, not on simplifying it beyond what the evidence supports.